#LLM

9 posts

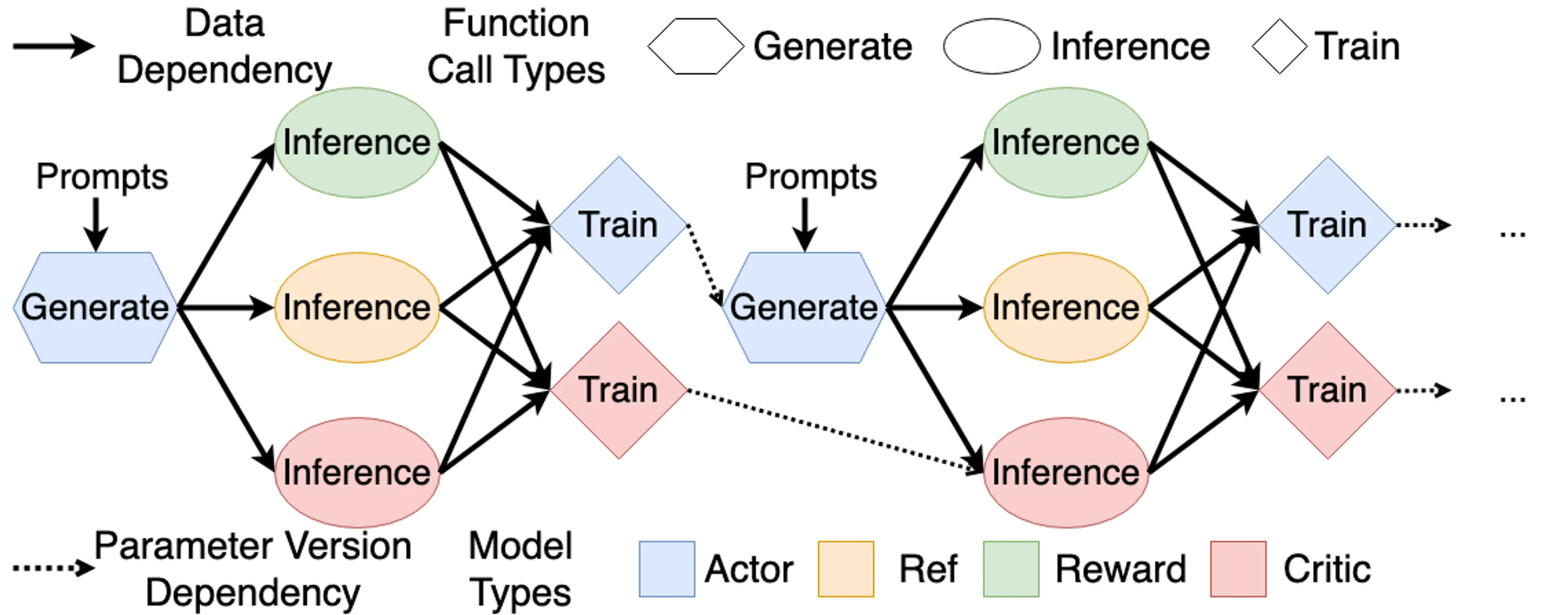

From RL to RLHF

This article is primarily based on Umar Jamil's course for learning and recording purposes. Our goal is to align LLM behavior with our desired outputs, and RLHF is one of the most famous techniques for this.

Implementing Simple LLM Inference in Rust

I stumbled upon the 'Large Model and AI System Training Camp' hosted by Tsinghua University on Bilibili and signed up immediately. I planned to use the Spring Festival holiday to consolidate my theoretical knowledge of LLM Inference through practice. Coincidentally, the school VPN was down, preventing me from doing research, so it was the perfect time to organize my study notes.

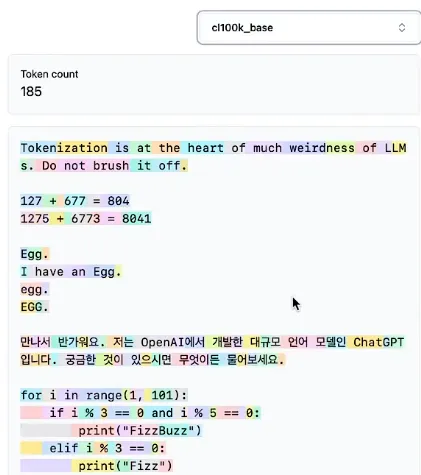

History of LLM Evolution (6): Unveiling the Mystery of Tokenizers

Deeply understand how tokenizers work, learning about the BPE algorithm, the tokenization strategies of the GPT series, and implementation details of SentencePiece.

History of LLM Evolution (5): Building the Path of Self-Attention — The Future of Language Models from Transformer to GPT

Building the Transformer architecture from scratch, deeply understanding core components like self-attention, multi-head attention, residual connections, and layer normalization.

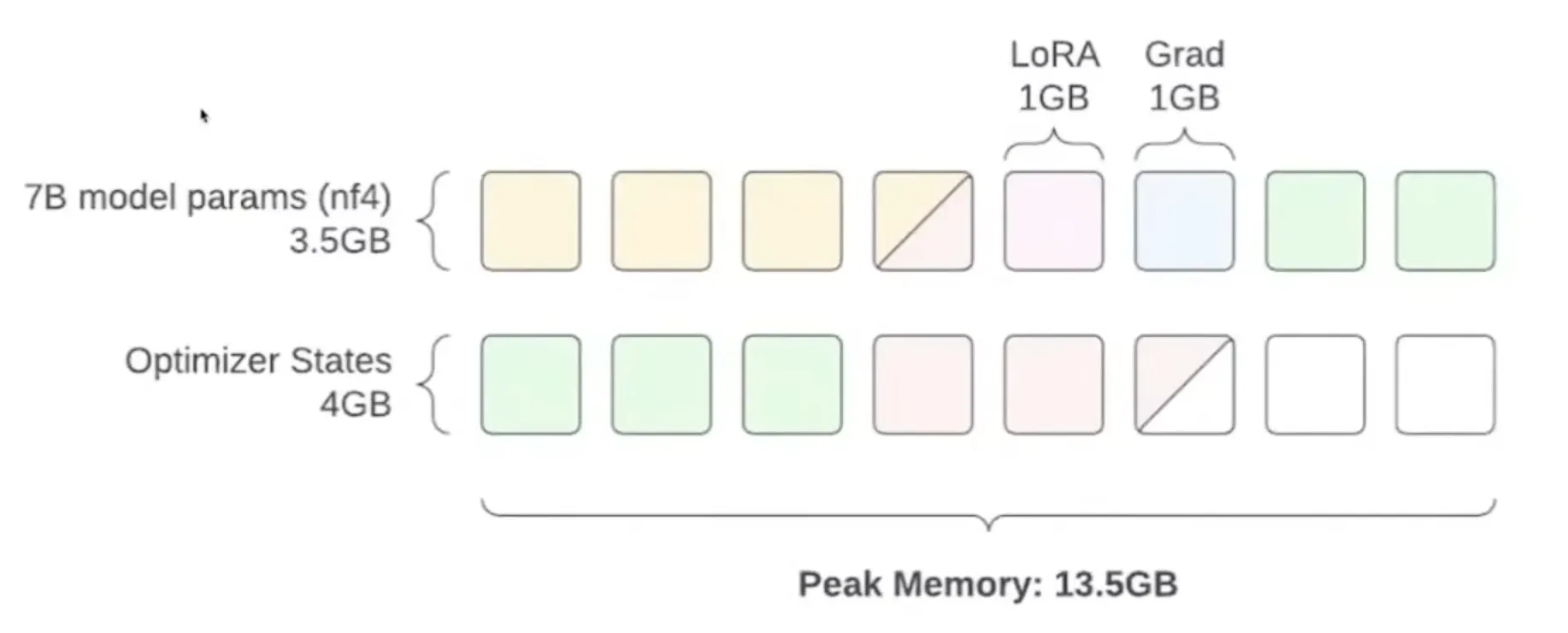

The Way of Fine-Tuning

Learn how to fine-tune large language models under limited VRAM conditions, mastering key techniques like half-precision, quantization, LoRA, and QLoRA.

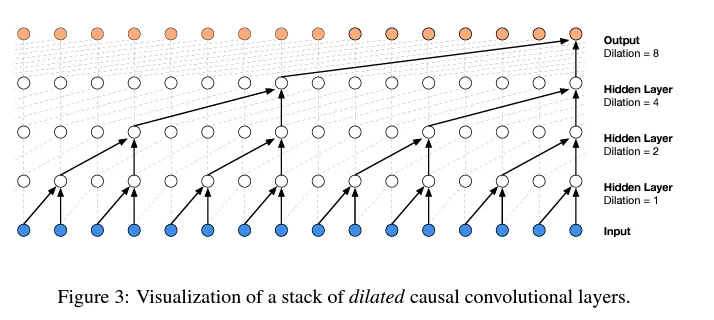

History of LLM Evolution (4): WaveNet — Convolutional Innovation in Sequence Models

Learn the progressive fusion concept of WaveNet and implement a hierarchical tree structure to build deeper language models.

The State of GPT

A structured overview of Andrej Karpathy's Microsoft Build 2023 talk, deeply understanding GPT's training process, development status, the current LLM ecosystem, and future outlook.

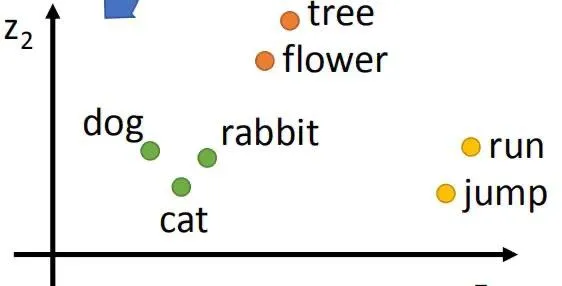

History of LLM Evolution (2): Embeddings — MLPs and Deep Language Connections

Exploring Bengio's classic paper to understand how neural networks learn distributed representations of words and how to build a Neural Probabilistic Language Model (NPLM).

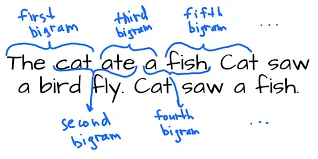

History of LLM Evolution (1): The Simplicity of Bigram

Starting with the simplest Bigram model to explore the foundations of language modeling. Learn how to predict the next character through counting and probability distributions, and how to achieve the same effect using a neural network framework.